Gazette

Stories and news from across campus.

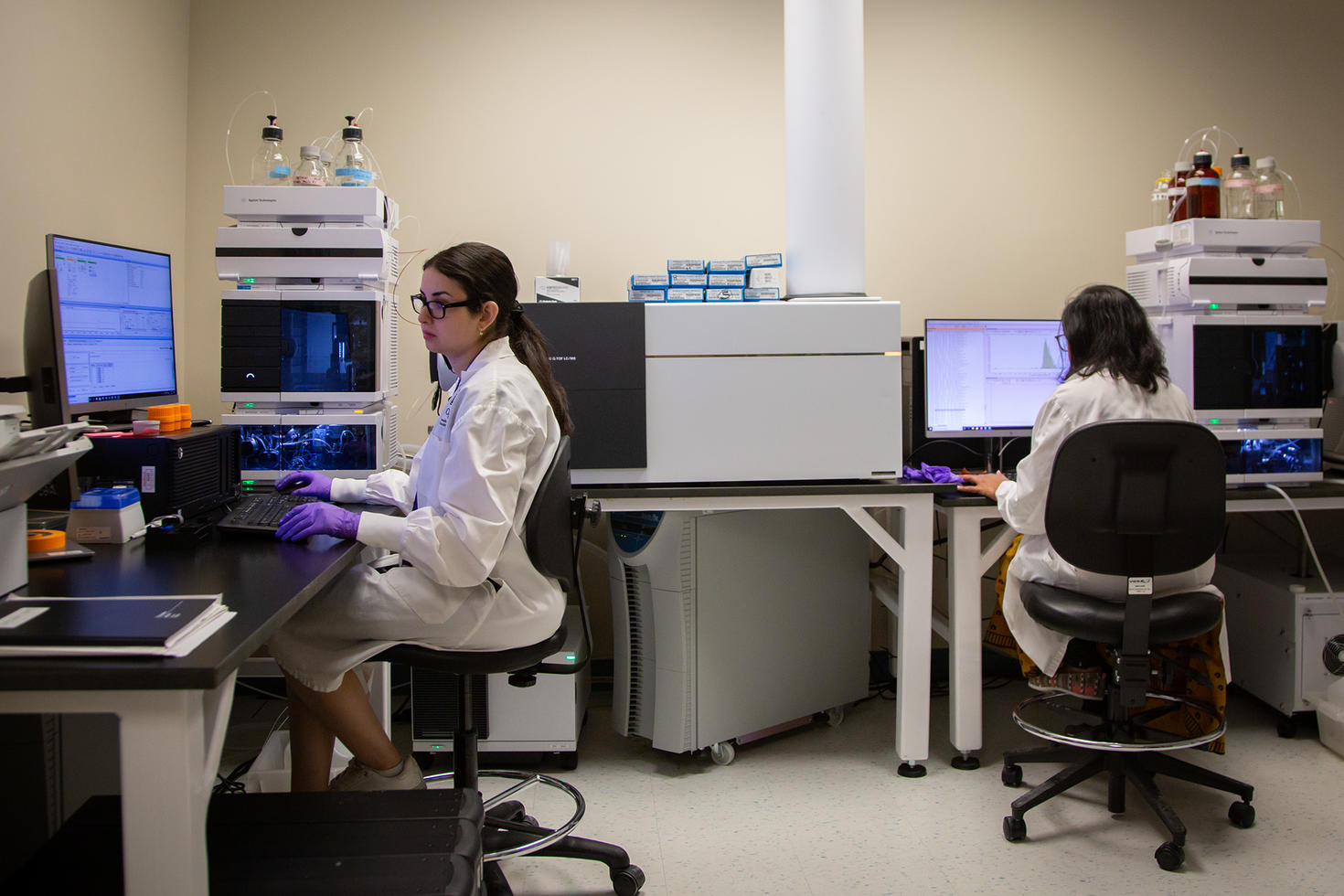

Ending the Drought: Ottawa’s Medical Research Community Welcomes Long-Awaited Wet Lab Facilities

Most people only think about their metabolism when their pants get too tight, blaming weight gain on their metabolism slowing down.

The University of Ottawa’s new Advanced Medical Research Centre will accelerate discovery and new treatments

The rapid development and deployment of vaccines during the COVID-19 pandemic highlighted the critical role of research in creating lifesaving medical…

If you build it, they will stay: Ending Ottawa’s biotech brain drain

Ottawa has earned its global reputation as a vibrant city for medical research and innovation. But if it wants to keep its intellectual capital in the…

Turning data into equity: How two researchers are reshaping the music industry and community services

What do country music charts and non-profit training have in common? At first glance, not much. But in the hands of uOttawa professors Jada Watson and…

Pushing quantum limits: A Canada–France collaboration on nonlocal boxes

What began as a master’s thesis has grown into a dynamic international collaboration that pushes the boundaries of quantum research. Led by Professor …

uOttawa Medicine scientists zero in on cellular mechanism fueling drug-resistant cancers

A Canada Research Chair's uOttawaMed lab unveils promising new insights into cancer treatment resistance, perhaps paving the way for enhancing t…

Engineering ultra-thin magnets to power next-gen electronics

A team of international researchers led by the University of Ottawa has made a breakthrough in the development of ultra-thin magnets—a discovery that …

How eulachon grease links generations, culture and cutting-edge science

In the coastal First Nation communities of British Columbia, the return of a small, silvery fish each spring marks much more than a seasonal cycle. Fo…

The University of Ottawa and Canadian Nuclear Laboratories accelerate low dose radiation research and foster next generation of scientists

The University of Ottawa (uOttawa), one of Canada’s most innovative universities, and the Canadian Nuclear Laboratories (CNL), Canada’s premier nuclear sci…

Aug 20

Parent Information Night in English

Are you a parent of a student considering applying to the University of Ottawa? Join us for an information session designed to help you understand wha…

Aug 21

Parent Information Night in French

Are you a parent of a student considering applying to the University of Ottawa? Join us for an information session designed to help you understand wha…

Arts District: A creative public space in the heart of a university and city of culture

The Faculty of Arts is a world bubbling with culture and ideas. Languages and literatures, humanities, fine and performing arts — all open the door to…

uOttawa grows Kanata North’s presence to meet rising innovation demands

New campus space at 350 Legget anchors research, innovation, and talent where the industry needs it most.

Incoming president Marie-Eve Sylvestre sees a world of possibilities

Marie-Eve Sylvestre is preparing to begin a new chapter at the University of Ottawa, as president and vice-chancellor. Her enthusiasm is contagious. R…

Get in the game. Get Gee-Gees tickets

Save 25% and get early access to the Rivalry Series, including the Panda Game — which draws a record crowd for university football in Canada — PLUS fo…

Intramurals are back again!

Socialize and stay healthy by playing your favourite sport. There’s soccer, basketball, dodgeball and so much more! Intramural sports are an ideal way…

What I wish I knew in first year

Current uOttawa students and alumni reflect on what they would tell their first-year selves if they could travel back in time. Check out their words o…

A look back on CCERBAL 2025 and Carrefour francophone

Two major events this spring featured several opportunities to quench your thirst for knowledge and to connect with peers.

Building the future of French-language education: A promising agreement between the universities of Regina and Ottawa

Over the years, too many young Saskatchewan francophones have seen their dreams of university studies in French come up against a tough choice: to lea…

Three OLBI professors get Learning Futures Fund support

OLBI is pleased to announce that projects by our professors Reza Farzi, Parvin Movassat and David Pratt will receive Faculty of Arts Learning Futures …

Photonics for health care: a new laser for biosensors

PhD student Shayan Saeidi is developing a compact, affordable biosensor that could improve how we detect health conditions in fluid samples.

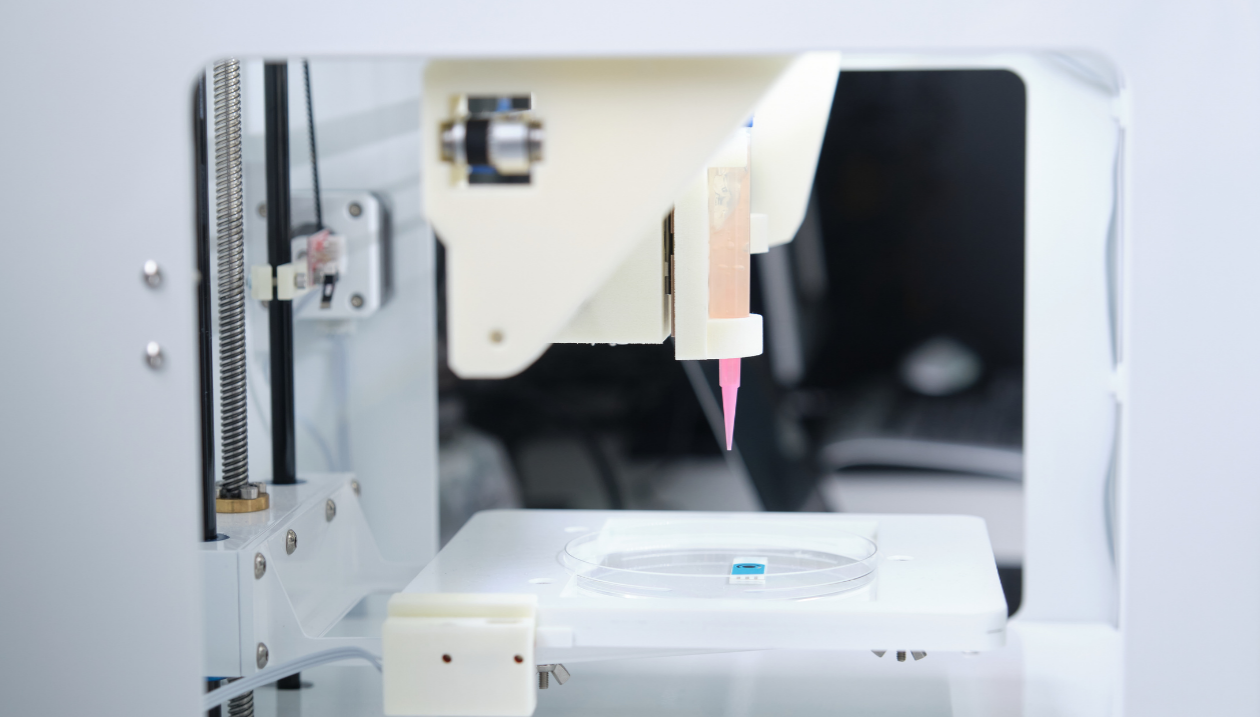

3D printing living tissue: from joint pain to osteoarthritis

Biomedical engineering student Olivia Steiner is developing smarter bioinks to improve 3D bioprinting for tissue engineering — with big implications f…

Partnership accelerating medical capacity in Tanzania

VR learning touted as a “new high” in knowledge sharing as uOttawa pursues its goal of improving medical care internationally.

Cracking the code behind DNA repair and disease

Polanyi Prize winner Hossein Davarinejad maps the molecular signals involved in gene regulation and DNA repair. His work is contributing to new insigh…

Professor Shiva Nejati bridges theory and practice to improve software reliability

Professor Shiva Nejati, a software engineering researcher at the University of Ottawa, is collaborating with industry leaders like BlackBerry to make …

Powering your phone with a laser

uOttawa researchers demonstrate new laser power converters that can transmit power to more distant, remote destinations.

The hidden costs of being a woman at work

As a renowned professor of psychology in the industrial-organizational program at Pennsylvania State University, Dr. Grandey took the stage at the Tel…

Entrepreneurial women: Olga Koppel’s quest for cleaner cities

“Pivoting is the one constant in starting a business.” That’s how Olga Koppel describes her journey from academia to entrepreneurship.

CEO Magazine ranks Telfer the #1 global executive MBA and a tier one MBA

Ottawa, ON – The Telfer School of Management proudly announces that its graduate programs have once again secured top recognition in the 2025 CEO Maga…

Guadalupe Escalante Rengifo: Teaching that connects and transforms

For Professor Guadalupe Escalante Rengifo, teaching is not a one-way transfer of knowledge — it’s a dynamic, reciprocal act of learning.

What a study about prison theatre reveals about rehabilitation

In a makeshift theatre inside a federal prison on Vancouver Island, incarcerated men adjust lights, paint backdrops and rehearse lines.

Theatrical innovation with sensory immersion as a creative force

Anne-Marie Ouellet, an associate professor in the Department of Theatre, is exploring an innovative approach to playwriting. Rather than starting from…

Professor Pascale Fournier leads a new partnership addressing the Indian Act’s legacy of discrimination

For over a century, the Indian Act has defined – and too often denied – Indigenous identity. A bold new research partnership is taking aim at this leg…

Strengthening trust through Indigenous engagement

Before engaging with community, we need to have something to offer. That’s how Tareyn Johnson, director of Indigenous affairs, explains the thinking b…

Spotlight on ageism in the workplace

Long ignored, ageism rarely came up in conversations about workplace equity. But the tide is turning. Since the 2021 publicati…

Faculty news

Contact us

Gazette news

Tabaret Hall

550 Cumberland Street, room M284

Ottawa ON K1N 6N5

Canada

Tel: 613-562-5800 extension 5708

Fax: 613-562-5117

[email protected]

Submit your story

Have an idea for a story? Want to get your initiative out there? Reach out to our team to submit your story at [email protected].